
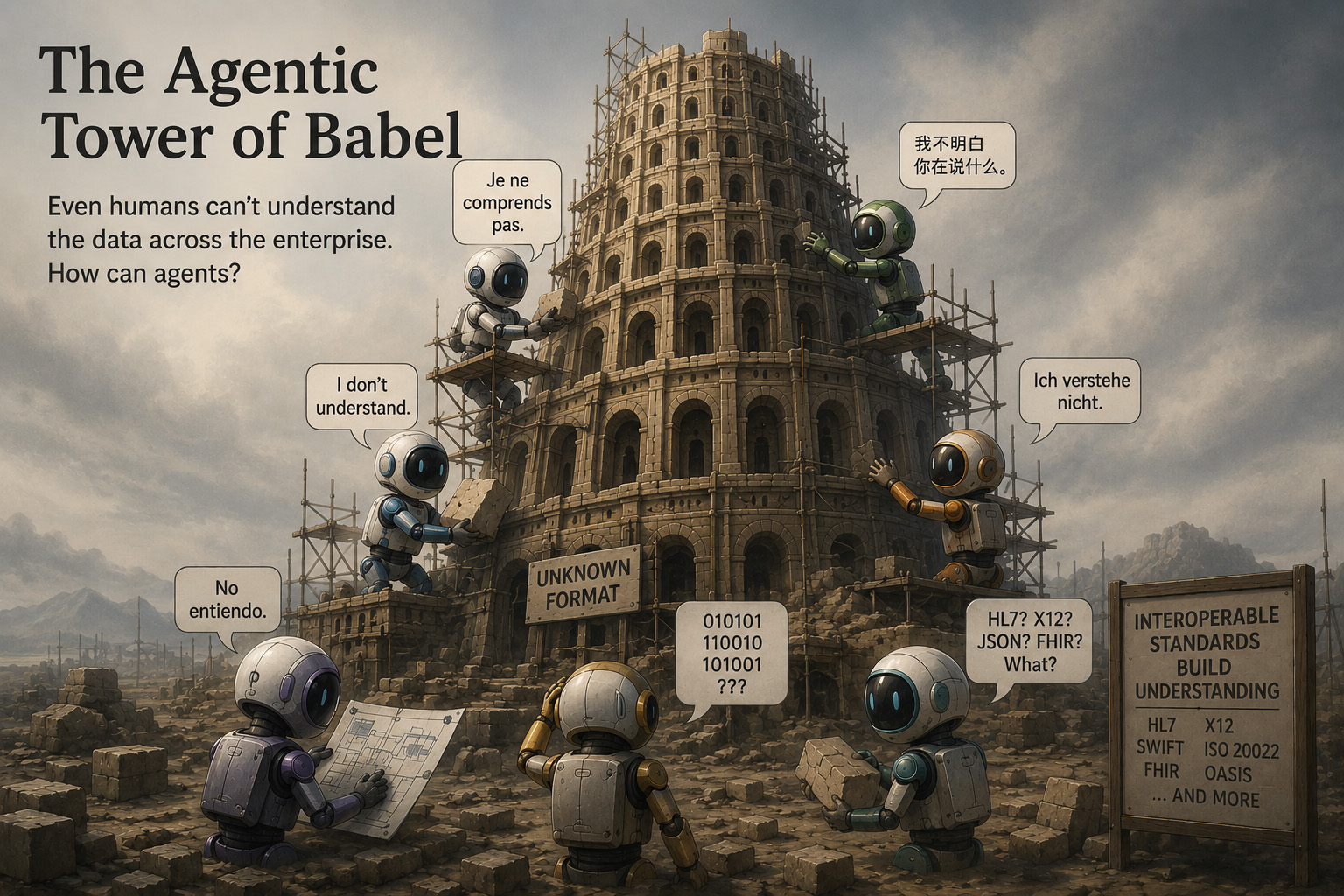

The story of the Tower of Babel recounts how a group of people suddenly weren't able to work with one another because they all spoke different languages. That's happening to agents today.

McKinsey and Gartner both point to hundreds of millions of dollars lost each year because people within organizations can't find and accurately interpret enterprise data. Even our most experienced employees struggle in this area, yet we think agents will succeed?

Human language is wonderfully, yet frustratingly, nuanced. As a result, humans have become adept at inferring meaning even when context is lacking. A conversation about constructing a building may reference many types of blueprints: electrical, foundation, even landscaping. Yet somehow humans keep up, intuitively knowing that if the discussion is about plants, the "blueprint" in question is the landscape plan.

Agents aren't as smart.

Agents must pick the right agents and tools to call using simple narrative descriptions, looking for vector similarities between those narratives, the system prompt, the user prompt, and the agent's memory. This is the equivalent of agentic Babel.
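
To make this failure mode concrete, here is a minimal Python sketch of similarity-based tool selection. It assumes a hypothetical catalog of three tools described only in prose, and it uses bag-of-words cosine similarity as a crude stand-in for the embeddings a real agent framework would use. The tool names and descriptions are invented for illustration.

```python
import math
import re
from collections import Counter

# Hypothetical catalog: each tool is known only by a free-form description.
TOOLS = {
    "electrical_plan_tool": "Update the electrical blueprint for the building wiring and panels",
    "landscape_plan_tool": "Revise the landscape design, garden beds and trees around the site",
    "foundation_plan_tool": "Update the foundation blueprint for the building footings",
}


def bag_of_words(text: str) -> Counter:
    """Lowercased word counts; a crude stand-in for an embedding vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def pick_tool(request: str) -> str:
    """Return the tool whose description is most similar to the request."""
    query = bag_of_words(request)
    return max(TOOLS, key=lambda name: cosine(query, bag_of_words(TOOLS[name])))


if __name__ == "__main__":
    print(pick_tool("Update the blueprint to add more plants"))
```

A person reading "add more plants" would reach for the landscape plan. This selector returns `foundation_plan_tool` instead: that description shares "update", "the", and "blueprint" with the request and is shorter than the electrical one, while the landscape description never mentions plants. Real embeddings handle synonyms far better than word counts, but the choice is still a similarity score, not a statement of meaning.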

Is the date found in a document the same as the `order_date` the tool requires? Is it formatted the same? Are the timestamps in the same time zone? Are the units the same on the measurement? Is the order processing agent for incoming or outgoing orders?
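
These questions are not hypothetical. This short Python sketch, using only the standard library, parses one hypothetical value under two common date conventions and then attaches a single wall-clock time to two different offsets. Nothing in the string itself says which reading is correct.

```python
from datetime import datetime, timedelta, timezone

RAW = "03/04/2025 09:00"  # hypothetical value found in a document

# Two equally plausible readings of the same string.
us_reading = datetime.strptime(RAW, "%m/%d/%Y %H:%M")  # month first: 4 March 2025
eu_reading = datetime.strptime(RAW, "%d/%m/%Y %H:%M")  # day first: 3 April 2025

# The same wall-clock time attached to two different (illustrative, fixed) offsets.
as_utc = us_reading.replace(tzinfo=timezone.utc)
as_utc_minus_5 = us_reading.replace(tzinfo=timezone(timedelta(hours=-5)))

if __name__ == "__main__":
    print(us_reading.date(), eu_reading.date())  # 2025-03-04 2025-04-03
    print((eu_reading - us_reading).days)        # 30
    print(as_utc_minus_5 - as_utc)               # 5:00:00
```

The two date readings are a month apart, and the two time-zone readings are five hours apart, all from one unlabeled value.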

These are left to interpretation by the LLM using probabilistic matching. Even worse, the answer may be different the next time the agent is called because the context may be different.

Research shows that even given a small number of tools to select from, LLMs do not reliably:

That's not a foundation you can build an enterprise on.

I've been reading, with interest, a number of articles promoting ontologies as a way to improve agent discovery. In principle, I agree completely. Agents need a standard vocabulary for defining capabilities.

Note the word "standard". That's intentional, and it's where the problem with most ontology approaches lies.

A privately built ontology, no matter how well executed, has an interoperability defect that will become a barrier to value.

Realizing the value proposition of agentic AI requires that agents, tools, and resources be reliably discovered. That's why you started your ontology. The problems are that your ontology is grounded in how they are used today and, worse, that it doesn't match any other organization's ontology, which prevents interoperability.

If this makes you uncomfortable about agents, it should.

We wouldn't design human processes with these gaps, so why would we architect agents this way? Agents need consistent, machine-readable structure.

Ever since the advent of computers, there has been a need to exchange data between systems and organizations. Hundreds of thousands of hours over decades have been spent on this problem, resulting in a rich set of well-established standards such as HL7 and FHIR for healthcare, X12 for general EDI, SWIFT for banking, FIBO for financial concepts, GS1 for product identification, SNOMED CT for clinical terms, and many more.

These standards define the business transactions, the data meaning, and the syntax. They are public. They are versioned. They are maintained by neutral standards bodies with heavy industry participation. They are already referenced by regulators. They already mean the same thing across organizations.

Other standards layer on top. NAICS, ISIC, and NACE classify industries. APQC's Process Classification Framework defines cross-industry and industry-specific processes. eTOM does the same for telecom, BIAN for banking, SCOR for supply chain, ACORD for insurance, and ISA-95 for manufacturing.

Together, these provide a rich and unambiguous vocabulary for describing processes, data, and capabilities. Where gaps exist, they can be filled with API definitions and extensions rather than by altering the standards' structure.

This doesn't mean agents should exchange these fully defined transaction payloads. It simply means we borrow the standards' definitions of meaning and syntax as a common vocabulary for defining each element.
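
As a minimal sketch of borrowing meaning per element, assume an agent declares only the few elements it uses, each keyed by an IRI that would point into a public standard. The IRIs below are placeholders under `example.org`, not real identifiers from any standard, and the type and unit checks are illustrative rather than part of any specification.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ElementDef:
    """A single data element whose meaning is borrowed from a public standard."""
    iri: str                     # concept identifier (placeholder IRI)
    datatype: type               # expected Python type of the value
    unit: Optional[str] = None   # expected unit, if the element is a measurement


ORDER_DATE = "https://example.org/std/OrderDate"      # hypothetical IRI
GROSS_WEIGHT = "https://example.org/std/GrossWeight"  # hypothetical IRI

# Only the elements this agent uses, not the full transaction set they come from.
VOCAB = {
    ORDER_DATE: ElementDef(ORDER_DATE, str),
    GROSS_WEIGHT: ElementDef(GROSS_WEIGHT, float, unit="kg"),
}


def check_element(iri: str, value: object, unit: Optional[str] = None) -> list[str]:
    """Return a list of problems; an empty list means the element conforms."""
    definition = VOCAB.get(iri)
    if definition is None:
        return [f"unknown element {iri}"]
    problems = []
    if not isinstance(value, definition.datatype):
        problems.append(f"{iri}: expected {definition.datatype.__name__}, got {type(value).__name__}")
    if unit != definition.unit:
        problems.append(f"{iri}: expected unit {definition.unit!r}, got {unit!r}")
    return problems


if __name__ == "__main__":
    print(check_element(GROSS_WEIGHT, 12.5, "kg"))  # []
    print(check_element(GROSS_WEIGHT, 12.5, "lb"))  # one unit problem
```

The agent never needs the full transaction schema; each element it touches carries a pointer to an agreed definition, and a mismatch is reported rather than silently accepted.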

Creating the structure for agents requires four things to be defined in a shared, machine-readable form grounded in industry standards:

Each of these fails differently when left to free-form narrative. Together, they form the substrate that agent interoperability stands on.

Each of these has historically been documented in independently written prose: no consistency, no structure, no grounding in accepted terminology. That is a foundation of ambiguity, not enterprise-grade interoperability.

Once capabilities and processes are grounded, discovery becomes the runtime question: given what I need, who can do it in the way I require? And that question must be answered across federation boundaries: hosting, agentic frameworks, models, vendors, and organizations.

Functional matching is only part of the answer. Discovery must also fully support non-functional characteristics:

These are first-class discovery criteria, not metadata footnotes. Using an agent, tool, or resource without consideration of these requirements can result in breaches, cost overruns, regulatory exposure, or other undesirable consequences. An agent that satisfies the functional requirement but violates the compliance requirement is not a match — it's a liability.

Discovery without a shared vocabulary collapses into keyword search. The model picks the most lexically similar agent, not necessarily the right one. The resulting workflow looks plausible, causing silent errors that propagate downstream. Discovery must be deterministic. Determinism requires shared meaning, not similar phrasing.

As discussed, schemas define syntax but do nothing to define the meaning of data in the context of the actions being taken. Today, agents use similarity matching against memory, prompt context, and retrieved data to determine what maps to the inputs and outputs of agents, tools, and resources. This is the core McKinsey and Gartner finding, data misuse costing billions, now reproduced at machine speed.

You wouldn't condone a developer exchanging data with an API "because it looked like it matched." You would expect the developer to research and understand the data requirements before use. We must require agents to do the same. That requires us to provide them with the structure to do so. Without grounded data semantics, every agent-to-agent handoff is a developer skipping the research step. At human speed, that pattern produced the loss figures McKinsey and Gartner cite. At agent speed, with no human in the loop, the same pattern will produce them faster.
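
A minimal sketch of what "doing the research" could look like at a handoff: each side declares its fields against semantic IRIs, and an input is bound only when exactly one output carries the same IRI. The IRIs and field names are hypothetical; the point is that a missing or ambiguous meaning stops the binding instead of being guessed from a similar name.

```python
from collections import defaultdict

# Hypothetical semantic identifiers.
ORDER_DATE = "https://example.org/std/OrderDate"
SHIP_DATE = "https://example.org/std/ShipDate"
CURRENCY = "https://example.org/std/Currency"


def bind(outputs: dict[str, str], inputs: dict[str, str]) -> tuple[dict[str, str], list[str]]:
    """Map each input field to the producer output with the same IRI.

    outputs/inputs map a local field name to a semantic IRI.
    Returns (input_name -> output_name, unresolved input names).
    """
    by_iri = defaultdict(list)
    for name, iri in outputs.items():
        by_iri[iri].append(name)

    bindings, unresolved = {}, []
    for name, iri in inputs.items():
        candidates = by_iri.get(iri, [])
        if len(candidates) == 1:
            bindings[name] = candidates[0]
        else:
            unresolved.append(name)  # missing or ambiguous: stop and ask, don't guess
    return bindings, unresolved


if __name__ == "__main__":
    producer = {"date": SHIP_DATE, "po_date": ORDER_DATE}
    consumer = {"order_date": ORDER_DATE, "currency": CURRENCY}
    print(bind(producer, consumer))  # ({'order_date': 'po_date'}, ['currency'])
```

A name-based matcher could easily bind `order_date` to `date`, which here means the ship date. Binding by IRI picks `po_date` and reports `currency` as unresolved.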

The Semantic Agent Discovery and Attribution Registry (SADAR) is an open specification under the Community Specification License 1.0, published as part of OpenSemantics.org.

For discovery and process definitions, SADAR specifies a registry of entities (publishers and owners of content) and entries (agents, tools, and resources). Every registry entry is described by a manifest that is mapped into a standards-based taxonomy:

Manifests not only define, in industry-standard terms, the entry they represent; they are also signed by the publisher, which verifies provenance and integrity. Manifests carry the signing key, so that integrity can be validated. The role of the manifest in security, authentication, and authorization is beyond the scope of this article, but is covered at OpenSemantics.org.
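
As a hedged illustration of why a signed manifest needs a deterministic serialization, this Python sketch serializes a manifest with sorted keys and compact separators and hashes it with SHA-256. It does not follow SADAR's actual signing procedure: the serialization rule is a simplifying assumption, the manifest fields are hypothetical, and the signing and verification step with the publisher's key, which would need a public-key library, is deliberately omitted.

```python
import hashlib
import json


def canonical_bytes(manifest: dict) -> bytes:
    """Serialize deterministically, excluding the signature field itself.

    Sorted keys and compact separators are an assumption made for this sketch.
    """
    body = {k: v for k, v in manifest.items() if k != "signature"}
    return json.dumps(body, sort_keys=True, separators=(",", ":"), ensure_ascii=False).encode("utf-8")


def manifest_digest(manifest: dict) -> str:
    """SHA-256 over the canonical bytes: the content a publisher's signature would cover."""
    return hashlib.sha256(canonical_bytes(manifest)).hexdigest()


if __name__ == "__main__":
    original = {
        "name": "order-intake",  # hypothetical manifest fields
        "publisher": "example-co",
        "processes": ["https://example.org/pcf/ProcessOrders"],
    }
    tampered = dict(original, processes=["https://example.org/pcf/ApproveCredit"])
    print(manifest_digest(original) == manifest_digest(tampered))  # False
```

Key order and the presence of a signature field do not change the digest, while any change to the declared content does. That is what lets a verifier detect tampering once a real signature over these bytes is checked.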

SADAR's design choice is to make these vocabularies — accessed as Internationalized Resource Identifiers (IRIs) from authoritative sources — the grounding substrate for agent manifests. An agent doesn't declare "I perform underwriting." It declares that it performs one or more processes identified in APQC PCF, operates over data fields defined in FIBO and SWIFT, and expects prerequisites that other industry-grounded agents will recognize. For more technical, role-level work, SADAR can reference O*NET task definitions as a complementary taxonomy.
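
To illustrate the difference between declaring "I perform underwriting" and declaring a grounded process, here is a minimal Python sketch of a manifest and a lookup by process IRI. The `Manifest` fields and the `example.org` IRIs are hypothetical stand-ins for APQC PCF and FIBO identifiers, not SADAR's manifest schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Manifest:
    """Hypothetical, simplified manifest: grounded identifiers plus human-readable text."""
    name: str
    description: str                           # for humans; ignored by matching
    processes: frozenset[str]                  # process IRIs (stand-ins for APQC PCF)
    data_fields: frozenset[str] = frozenset()  # data-element IRIs (stand-ins for FIBO)


UNDERWRITE = "https://example.org/pcf/UnderwritePolicy"      # placeholder IRI
PUBLISH_NEWS = "https://example.org/pcf/PublishMarketNews"  # placeholder IRI
PREMIUM = "https://example.org/fibo/InsurancePremium"       # placeholder IRI

REGISTRY = [
    Manifest("uw-assistant", "Underwriting assistant", frozenset({UNDERWRITE}), frozenset({PREMIUM})),
    Manifest("policy-risk-eval", "Evaluates risk on new policy applications", frozenset({UNDERWRITE})),
    Manifest("uw-news", "Underwriting news summarizer", frozenset({PUBLISH_NEWS})),
]


def find_by_process(registry: list[Manifest], process_iri: str) -> list[str]:
    """Exact lookup: an entry matches only if it declares the process IRI."""
    return sorted(m.name for m in registry if process_iri in m.processes)


if __name__ == "__main__":
    print(find_by_process(REGISTRY, UNDERWRITE))  # ['policy-risk-eval', 'uw-assistant']
```

Two agents with quite different descriptions are found because they declare the same process IRI, while the news summarizer, whose description contains "Underwriting", is not. The free-text description stays available for humans but plays no part in the match.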

This raises a critical point. SADAR is not limited to any specific set of standards. The standards, at all levels, create grounded namespaces. NAICS can be augmented by an international industry taxonomy. Process classifications can be extended where industry standards are silent. And SADAR recognizes that as robust as the standards are, they don't cover every capability required. In those cases, the standards should be extended, not overridden, using the API and data integration standards of critical systems. Widely adopted platforms such as Salesforce and ServiceNow can be represented directly via their own published namespaces, anchored back to the standards taxonomy.
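
The "extend, don't override" rule can be checked mechanically if every local term points to a broader term. In this minimal sketch, which assumes a hypothetical `acme` extension namespace and a placeholder standard namespace, a term is accepted only if its chain of broader links ends at a standard term; a term invented in private with no anchor is rejected.

```python
from typing import Optional

# Hypothetical namespaces: terms under STANDARD_PREFIXES come from a public standard;
# everything else is a local extension that must point at a broader term.
STANDARD_PREFIXES = ("https://example.org/std/",)

BROADER = {
    "https://example.org/acme/RushOrderIntake": "https://example.org/std/ProcessOrders",
    "https://example.org/acme/VipRushOrderIntake": "https://example.org/acme/RushOrderIntake",
}


def anchor(term: str) -> Optional[str]:
    """Follow broader links until a standard term is reached.

    Returns the standard term, or None if the chain dangles or loops.
    """
    seen = set()
    while not term.startswith(STANDARD_PREFIXES):
        if term in seen or term not in BROADER:
            return None  # cycle, or an extension with no anchor
        seen.add(term)
        term = BROADER[term]
    return term


if __name__ == "__main__":
    print(anchor("https://example.org/acme/VipRushOrderIntake"))  # .../std/ProcessOrders
    print(anchor("https://example.org/acme/InventedInPrivate"))   # None
```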

The principle is consistent throughout: ground where a standard exists, extend where one doesn't, and never invent in private when a public anchor is available.

Since both requesters and services express their requirements and capabilities via manifests, matching becomes a deterministic intersection. Mandatory requirements require exact matches. Non-functional requirements allow for overlaps and ranges such as pricing tiers, response-time bands, certifications held, etc.
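
A minimal sketch of deterministic matching, assuming a requester states mandatory process IRIs and certifications as sets and states price and response time as acceptable ranges. Mandatory items must be contained exactly in what the service offers; ranges match when they overlap. The field names, placeholder IRI, and overlap rule are illustrative assumptions, not SADAR's defined matching algorithm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Range:
    """Closed numeric interval, e.g. a price tier or a response-time band."""
    low: float
    high: float

    def overlaps(self, other: "Range") -> bool:
        return self.low <= other.high and other.low <= self.high


@dataclass(frozen=True)
class Profile:
    """What a requester needs, or what a service offers (hypothetical fields)."""
    processes: frozenset       # process IRIs: mandatory, exact
    certifications: frozenset  # certifications: mandatory, exact
    price: Range               # price per call, in an agreed currency
    latency_ms: Range          # response-time band in milliseconds


def matches(need: Profile, offer: Profile) -> bool:
    """Deterministic: mandatory sets must be contained, ranges must overlap."""
    return (
        need.processes <= offer.processes
        and need.certifications <= offer.certifications
        and need.price.overlaps(offer.price)
        and need.latency_ms.overlaps(offer.latency_ms)
    )


P = "https://example.org/pcf/ProcessOrders"  # placeholder process IRI
NEED = Profile(frozenset({P}), frozenset({"SOC2"}), Range(0, 10), Range(0, 200))
OFFERS = {
    "full-match": Profile(frozenset({P}), frozenset({"SOC2", "ISO27001"}), Range(5, 20), Range(100, 400)),
    "missing-cert": Profile(frozenset({P}), frozenset({"ISO27001"}), Range(1, 2), Range(10, 50)),
    "too-expensive": Profile(frozenset({P}), frozenset({"SOC2"}), Range(15, 30), Range(10, 50)),
}

if __name__ == "__main__":
    print(sorted(name for name, offer in OFFERS.items() if matches(NEED, offer)))  # ['full-match']
```

Given the same two profiles, `matches` always returns the same answer, which is the property that similarity scoring cannot offer.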

This matching is bilateral. A service can define mandatory requirements of its callers. That's useful for things like ensuring consistency of compliance frameworks across a process flow, where any non-compliance makes the entire flow non-compliant.
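
Bilateral matching can be sketched as two subset checks, one in each direction. This hypothetical example assumes each party lists what it provides and what it requires of the other side; the compliance tag and process IRI are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Party:
    """A caller or a service: what it provides and what it requires of the other side."""
    name: str
    provides: frozenset
    requires: frozenset


def bilateral_match(caller: Party, service: Party) -> bool:
    """Both directions must hold: each side's requirements are met by the other."""
    return caller.requires <= service.provides and service.requires <= caller.provides


ADJUDICATE = "https://example.org/pcf/AdjudicateClaim"  # placeholder process IRI
HIPAA = "compliance/HIPAA"                               # placeholder compliance tag

service = Party("claims-adjudicator", frozenset({ADJUDICATE, HIPAA}), frozenset({HIPAA}))
compliant_caller = Party("claims-orchestrator", frozenset({HIPAA}), frozenset({ADJUDICATE}))
adhoc_caller = Party("ad-hoc-script", frozenset(), frozenset({ADJUDICATE}))

if __name__ == "__main__":
    print(bilateral_match(compliant_caller, service))  # True
    print(bilateral_match(adhoc_caller, service))      # False
```

The ad hoc caller would pass a one-directional check, since the service provides what it asks for, but the service's own requirement rejects it, keeping a non-compliant participant out of the flow.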

This is the difference between discovery that works at federation scale and discovery that doesn't.

Interoperability is not a feature you bolt on. It is a property that emerges from architectural choices made early.

Two agents built in different organizations, on different clouds, on top of different model providers, in different programming languages, will interoperate if, and only if, they share semantic anchors. They will not interoperate because they share an HTTP transport, a JSON schema, or a function-calling convention. Those are necessary plumbing, not interoperability.

This is the basis of all industry standards. While transport and protocol matter, true interoperability comes from global agreement on the intent of the integration, with clear expectations about where it fits in a macro process and what each data element definitively represents in terms of that intent.

Agents are the next iteration of the same problem, with added complexity: the integrations aren't statically defined. They are discovered at runtime by an autonomous agent. Unlike prior integrations, agent discovery doesn't have the luxury of a static design review, human oversight, or unit and acceptance testing. The flow will be defined, the integrations and data discovered, and the work executed — all at runtime, in combinations that were never explicitly designed. The need for standardization and structure isn't smaller in the agentic world. It is exponentially higher.

The shift to open agent discovery is structurally similar to the shift to zero trust on the network. Just as a device's trust must no longer be defined by its location or network topology, an agent's, tool's, or resource's discoverability and use cannot be constrained by how it happens to be used today.

That may sound counter to framing agents in industry process definitions, but there is an important distinction. Business process descriptions define what must occur to meet a business need: before an order is accepted, you likely want to ensure that inventory or manufacturing schedules can meet the delivery date; you certainly want to agree on the price. Those constraints belong in the process.

Agent manifests, by contrast, use the standard definitions to declare capabilities. They draw on existing process definitions to do so, but the capabilities they declare can be composed in any combination. A separate control framework, outside the scope of this article, must determine at runtime whether a given composition is appropriate.

The standards give us a shared vocabulary for what an agent can do and the context to decide whether it should. The control layer provides enforcement.

Analysts and researchers agree: agents are the next breakthrough in AI value, and adoption will be a disproportionate differentiator for the organizations that get it right.

They also agree that the value proposition requires open-ended discovery across organizational boundaries. McKinsey describes the emerging architecture as the agent mesh. Deloitte positions the control layer as an enabler, not a barrier.

The shift to agents is happening quickly, and governance is behind.

SADAR's standards-grounded manifests are designed to be the anchor for discovery, attribution, trust, and processing context. Local ontologies will help your internal agents with internal discovery, but they become a barrier to interoperability. If they are truly ontologies rather than taxonomies, they likely contain graph relationships and bound semantics that will be expensive to maintain and that will constrain open discovery rather than enable it.

The agents are coming. The question is whether they will be able to talk to each other.

The vocabulary already exists. We just have to use it.