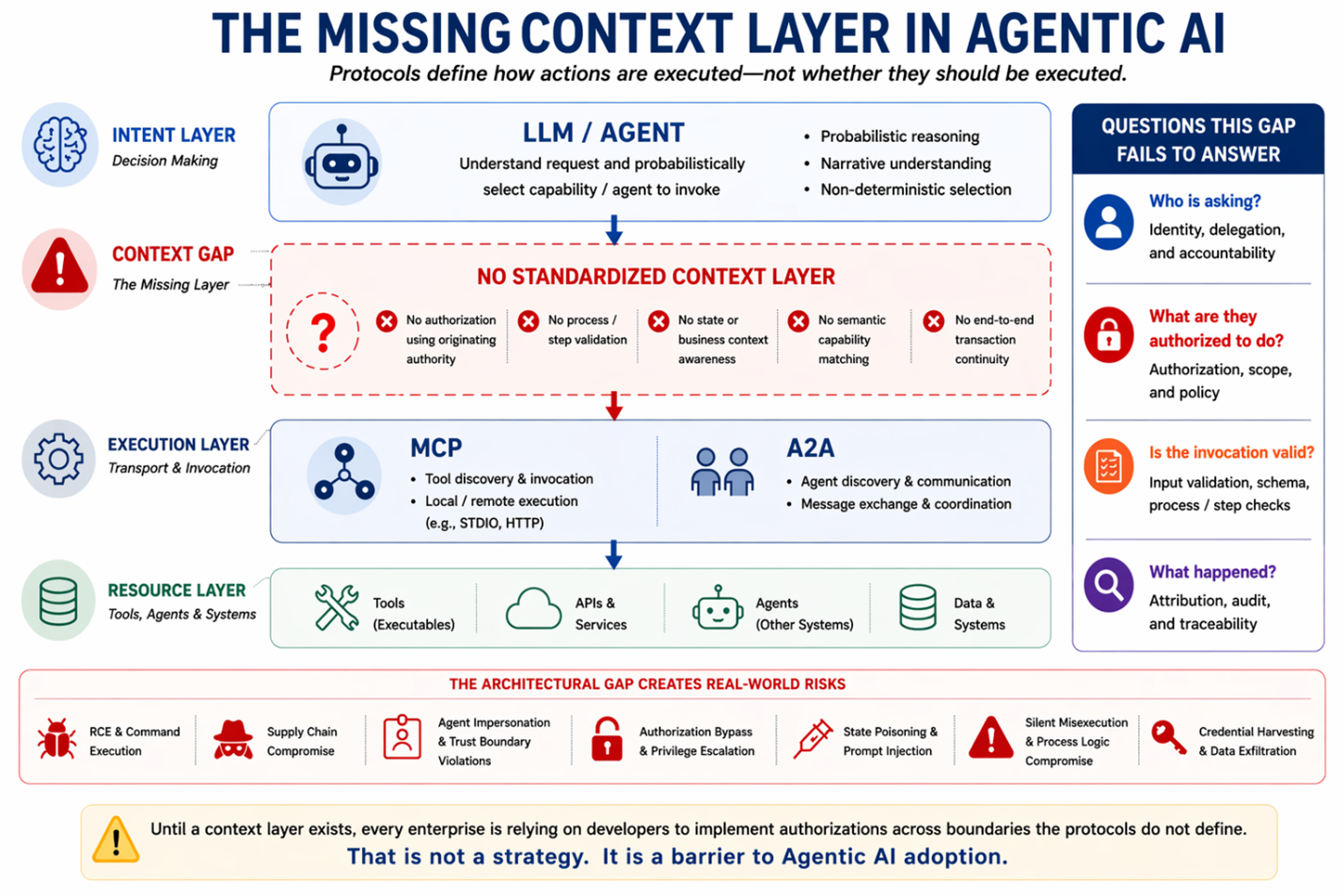

The fifteen Common Vulnerabilities and Exposures (CVEs) disclosed across MCP in the past twelve months (ten of them within the past five months) were not independent vulnerabilities. They were symptoms of a missing layer in the architecture. That missing layer is a barrier to the enterprise adoption of Agentic AI.

The same pattern appears in both MCP and A2A — not because either protocol is poorly engineered, but because both intentionally stop short of answering the most important question:

“Should this action happen at all?”

On April 15, 2026, OX Security published an advisory highlighting an issue with the de facto Agentic transport, MCP. The vulnerabilities exist in one of MCP’s communication methods, STDIO (standard input/output). This method gives LLMs an easy way to send data to “tools,” and it immediately made tens of thousands of tools LLM-callable. The issue is not the transport; it is control.

MCP is explicitly a transport standard defining how data moves between the LLM and the tool. Verification, authentication, authorization, logging, and related controls are outside of the standard’s scope, by intent.

OX’s disclosure is one of several in a twelve-month span. Its ten vulnerabilities, combined with five previous adjacent CVEs, highlight this scope issue.

The OX vulnerability itself has two primary vectors:

The former is the more widespread vulnerability, affecting any of the 150M+ downloads of MCP and the approximately 200K exposed instances.

The latter is more tightly scoped but an indicator of a more fundamental problem — agent execution is only governed by the LLM’s probabilistic matching.

The ability to trick an agent into making a malicious change highlights the real problem.

Agent actions are not authorized at runtime in the context of:

Cursor, Claude Code, and VS Code addressed the MCP-config-modification case with a guardrail saying “you must get permission before modifying sensitive files.” That is the correct behavior, but it works only because these platforms know the intent and the user by design.

The intent of a call made in these systems is an action within the broad context of software development. The user is known from the login. Adding a prompt before changing a defined list of files is straightforward because the system knows they are sensitive. As agents and tools become more generally discoverable, we can no longer infer intent because we don't know how, when, or why they may be discovered.

The intent context must be explicit.

The vision and value proposition for Agentic AI is open discovery of agents, tools, and resources for uses never-before-contemplated. Once achieved, humans and agents can open-endedly tackle tasks without being constrained by predefined UIs and workflows.

But there is a catch.

Our existing controls rely on the deterministic nature of our existing systems. Those systems are used by the same people in the same way every time. That is how we test, assign access, and audit. Allowing humans and agents to discover an undefined domain and range of agents dynamically shatters this foundation.

The missing layer provides that context in a standardized and durable way, allowing actions to be assessed at the time of invocation.

The Fintech Open Source Foundation (FINOS) is a neutral body where financial institutions collaborate on open standards. It has developed the FINOS AI Governance Framework (AIGF), which establishes a baseline of AI controls.

In late 2025, FINOS published version 2.0 of its AIGF, identifying six Agentic AI control requirements that directly capture these gaps:

Multi-agent trust boundary violations, agent authorization bypass, and tool chain manipulation map directly to the need for time-of-execution evaluation of context before allowing or denying a proposed action (and for assessing its results).

Supply chain compromise, persistence poisoning, and credential harvesting speak not to functions of the layer, but to how it must be designed and implemented.

The Agent-to-Agent (A2A) protocol defines:

Like MCP, A2A focuses on transport and invocation, remaining silent on the same gaps that sit behind the MCP CVEs:

The Cloud Security Alliance’s MAESTRO threat model of A2A flagged Unauthorized Agent Impersonation as a primary risk last year. That risk is the A2A-surface version of exactly what OX is showing on the MCP surface. The manifestation differs.

A2A’s failure mode is misdirected delegation rather than subprocess injection, but the architectural gap is identical. While it does not manifest as an immediate RCE, it enables misdirected execution, privilege escalation, and process-logic compromise.

The similarity of the issues in A2A to those in MCP underscores that the root cause is a missing layer.

Virtually every company has obligations, whether regulatory, compliance, audit, or customer-driven, to demonstrate visibility, control, and explainability of its operations. Today, these obligations are met by tightly constraining model and agent choices, building custom instrumentation, keeping use cases limited or rigid, and maintaining a largely homogeneous environment.

Even with these compromises, most organizations would struggle to fully meet the control obligations.

Constraining the architecture is not a substitute for control — it is a barrier to Agentic AI’s value.

Agentic processes sit squarely in the category of meaningful actions that compliance frameworks were built to govern. The architectural gap makes those obligations impossible to meet in a standardized, interoperable manner.

This is not an abstract risk. It is an inhibitor.

While general AI adoption for human assistance is firmly in the 80 percent range, Agentic AI adoption lags at less than 10%.

The CVEs are the security symptom. The low agent adoption is the business symptom. Both trace to the same missing layer.

Assistive AI is generally thought to aid productivity, but most companies struggle to understand exactly what value they are getting for their investment. The path to measurable value is much clearer when AI is performing complex business functions with high fidelity. This is the domain of agents.

Unfortunately, the use cases where agents take actions of consequence are simply ineligible for production as long as this gap exists. As agents become more prevalent and the discovery scope expands, this issue even impacts low-risk flows.

The risk of an agent flow isn't defined by what you intend it to do, but by what it can discover and could do.

The missing layer transcends:

That is why it must be independent.

It is a layer for agent activity across the enterprise, and must address these questions regardless of technical implementation:

Under what authority am I executing? When determining if an action should, or shouldn’t, be processed, you must know the originating scope of authority. Without knowing this, it is impossible to avoid privilege escalation and to properly assess the request.

Who is performing the task? Every agent and tool needs an identity that is distinct from any human or system. This identity must also establish provenance to its publisher and carry an immutable description of its capabilities. The core purpose of this identity is attribution, not access control.

Why am I doing this? Every action requires context. If a tool is asked to delete a file, why? What is the intent of the overall process? If an agent is asked to place an order, what business process is being executed, and is order placement even a valid step in that process?

Is the state appropriate for the requested action? Even if placing an order is part of a flow, am I placing the order before I agree on a price? The sequence of tasks in business and technical processes matters greatly. If prerequisites have not been satisfied, then the task should not be performed.

These questions are neither MCP’s nor A2A’s to answer.

It is the answers to these questions, taken together rather than independently, that support the decision to allow or deny an action.

Whether to allow or deny can be determined only at runtime, with this insight. It cannot be defined ahead of time.
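As a minimal sketch of how the four answers could be evaluated together at invocation time, the Python below checks authority, identity, intent, and state in a single decision. Every name here (`ActionContext`, `ActionRequest`, `PROCESS_STEPS`, `KNOWN_ACTORS`, the scope strings) is a hypothetical chosen for illustration and is not taken from MCP, A2A, or any published specification.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class ActionContext:
    """Hypothetical context record carried with a call (illustrative fields only)."""
    authority_scopes: FrozenSet[str]  # under what authority am I executing?
    actor_id: str                     # who is performing the task?
    process: str                      # why: the business process being executed
    completed_steps: FrozenSet[str]   # state: steps already completed


@dataclass(frozen=True)
class ActionRequest:
    action: str
    required_scope: str
    prerequisites: FrozenSet[str] = frozenset()


# Hypothetical registry data; a real deployment would source these elsewhere.
PROCESS_STEPS = {
    "procurement": frozenset({"request_quote", "agree_price", "place_order"}),
}
KNOWN_ACTORS = frozenset({"agent:purchasing-bot"})


def decide(ctx: ActionContext, req: ActionRequest) -> Tuple[bool, str]:
    """Return (allowed, reason). All four checks must pass together."""
    if req.required_scope not in ctx.authority_scopes:
        return False, "authority: scope not delegated by originator"
    if ctx.actor_id not in KNOWN_ACTORS:
        return False, "identity: actor is not a known, attributable identity"
    if req.action not in PROCESS_STEPS.get(ctx.process, frozenset()):
        return False, "intent: action is not a step in the declared process"
    missing = req.prerequisites - ctx.completed_steps
    if missing:
        return False, "state: unmet prerequisites: " + ", ".join(sorted(missing))
    return True, "allowed"


order = ActionRequest("place_order", "orders:write", frozenset({"agree_price"}))
early = ActionContext(
    frozenset({"orders:write"}), "agent:purchasing-bot",
    "procurement", frozenset({"request_quote"}),
)
ready = ActionContext(
    frozenset({"orders:write"}), "agent:purchasing-bot",
    "procurement", frozenset({"request_quote", "agree_price"}),
)
print(decide(early, order))  # denied: the price has not been agreed yet
print(decide(ready, order))  # allowed: all four answers line up
```

The same request is denied in one context and allowed in another, which is the point: the request alone does not carry enough information to decide.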

The missing layer must sit over the MCP/A2A transport protocols as well as the model, agentic platform, etc. Without this layer, vulnerabilities such as the ones captured in the CVEs emerge, along with other critical gaps in agent control. This problem will become even more acute as companies move past tightly defined agent-to-agent and agent-to-tool bindings. At the target state, agents and tools must be freely discoverable so they can be used in ways not previously considered.

Traditional controls rely on the deterministic and rigid structure of our existing programs. They allow for statically defining access to users and functions with the assurance that we know when, why, how, and by whom they will be used. That structure doesn’t exist with discoverable agents and tools.

These CVEs are the tip of the iceberg of agentic risks and vulnerabilities.

The missing control layer must transcend vendor and implementation boundaries. Failure to do so means controls break at those boundaries. Consider the implications if security standards like OAuth did not transcend the landscape. That would mean a different authentication and authorization model at every environment, product, and platform boundary, with “bridges” translating and patching them together. This is the opposite of where we should go. Instead, this layer must be:

It must federate. Sovereign operators running single-node deployments in restricted environments must be able to implement the full specification without calling a commercial service for any governance decision. Hyperscalers running at billion-invocation-per-day scale must implement the same specification with no degradation. Both deployments must interoperate when they need to, with identity, authorization, and attribution crossing trust boundaries cryptographically rather than terminating at them. Sovereign, locally-hosted deployment that federates globally with no cloud dependency is a first-class mode of the specification, not an edge case.

It must provide provenance and attribution. Agent and tool provenance must be clear and verifiable. Their declarations must be held in a third-party trust, with attribution embedded in cryptographic standards proving their origin and integrity. IDs must cryptographically declare this provenance as well as the definition of capabilities, costs, controls, sovereignty, and operational characteristics.

It must ground capabilities in a shared semantic vocabulary. Agent and tool capabilities and requirements must be defined not in developer-driven narrative but in existing industry terminology, advertising exactly what the capability produces, what it does not produce, and its declared prerequisites.
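To make the idea of a vocabulary-grounded declaration concrete, here is a small Python sketch. The vocabulary terms, the `CapabilityDeclaration` fields, and the `validate` helper are all assumptions made for illustration; a real vocabulary would come from an industry taxonomy, not a hard-coded set.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

# Hypothetical shared vocabulary (illustrative terms only).
VOCABULARY = frozenset({
    "price.agree",
    "purchase-order.create",
    "purchase-order.cancel",
    "payment.execute",
})


@dataclass(frozen=True)
class CapabilityDeclaration:
    name: str
    produces: FrozenSet[str]          # outcomes the capability produces
    does_not_produce: FrozenSet[str]  # outcomes it explicitly excludes
    prerequisites: FrozenSet[str]     # outcomes that must already exist


def validate(decl: CapabilityDeclaration) -> List[str]:
    """Return a list of problems; an empty list means the declaration is well-formed."""
    problems = []
    used = decl.produces | decl.does_not_produce | decl.prerequisites
    for term in sorted(used - VOCABULARY):
        problems.append(f"unknown term: {term}")
    for term in sorted(decl.produces & decl.does_not_produce):
        problems.append(f"contradiction: {term} is both produced and excluded")
    return problems


order_tool = CapabilityDeclaration(
    name="order-placement",
    produces=frozenset({"purchase-order.create"}),
    does_not_produce=frozenset({"payment.execute"}),
    prerequisites=frozenset({"price.agree"}),
)
print(validate(order_tool))  # an empty list: every term is in the vocabulary
```

Because the declaration uses only shared terms, a caller that has never seen this tool can still reason about what it will and will not do, and about what must happen first.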

It must produce attribution that survives every boundary it crosses. The context, originating scope of authority, acting agent/tool, overall process, and step/task-level constraints must propagate throughout the call flow in a privacy-preserving, immutable record.
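One way to picture an attribution record that survives each hop is a hash chain, where every record commits to the one before it. The sketch below uses only the Python standard library; the record fields and the `append_hop` and `verify` helpers are hypothetical. It shows tamper evidence only: a real design would also need signatures tied to verified identities and a privacy-preserving encoding, neither of which is attempted here.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: Dict[str, str]) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def append_hop(chain: List[Dict[str, str]], actor: str, action: str,
               authority: str, process: str) -> List[Dict[str, str]]:
    """Return a new chain with one more record linked to the previous hash."""
    prev = chain[-1]["hash"] if chain else GENESIS
    body = {"actor": actor, "action": action, "authority": authority,
            "process": process, "prev": prev}
    return chain + [dict(body, hash=_digest(body))]


def verify(chain: List[Dict[str, str]]) -> bool:
    """Check that every record hashes correctly and links to its predecessor."""
    prev = GENESIS
    for record in chain:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body.get("prev") != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


chain: List[Dict[str, str]] = []
chain = append_hop(chain, "agent:purchasing-bot", "request_quote",
                   "user:alice/orders:write", "procurement")
chain = append_hop(chain, "tool:order-api", "place_order",
                   "user:alice/orders:write", "procurement")
print(verify(chain))  # the untouched chain verifies

# Rewriting the originating authority on the first hop breaks verification.
tampered = [dict(chain[0], authority="user:admin/*")] + chain[1:]
print(verify(tampered))
```

The originating authority and process travel with every hop, so a downstream tool sees the same context the first agent had, and any later edit to an earlier record is detectable.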

The context layer is necessary but not sufficient. The answers to these questions must be consumed and acted upon by an enforcement layer at runtime. That is a separate architectural concern, and enforcement implementations will vary with each deployment's policy model, regulatory posture, and operational scale. A standardized context layer provides this information in a normative structure that any implementation requires. The context layer must allow for extensions to support future and problem-specific needs.

For AI to realize its potential, it must be Trustworthy. That, in itself, is a rich topic that led to the formation of OpenSemantics.org, whose mission is to be an open community for the topic. Within OpenSemantics, the SADAR (Semantic Discovery and Attribution Registry) project was formed under the stewardship of Cognita AI. The SADAR project is published under the Community Specification License 1.0, as outlined in its GitHub repository.

The design goals map onto the requirements above: neutrality across environments, models and providers, and agent frameworks. The specification allows public and private federation with first-class support for sovereign deployment. It defines discovery, authorization, and execution, grounded in a semantic capability taxonomy. Execution is traceable, producing cryptographically verifiable attribution chains that cross every boundary following the specification.

Vendors are beginning to market Agentic Control Planes, but these products do not interoperate, and they fail to address the gaps clearly highlighted by the CVEs, FINOS, and MAESTRO.

The gap is real. The CVEs and the market investment in closing it are the proof. A2A and MCP aren’t “broken.” They are staying in their lane. The industry must evolve to close this gap, but should do so in a way that promotes interoperability rather than vendor lock-in.

Ignoring an architectural gap that leaves agents vulnerable will lead to exploitation and audit issues, ultimately serving as a barrier to Agentic AI adoption.

CVEs referenced in this article:

References

OX Security, “The Mother of All AI Supply Chains: Critical, Systemic Vulnerability at the Core of Anthropic’s MCP,” April 15, 2026.

OX Security, “Technical Deep Dive,” April 15, 2026.

OX Security, “MCP Supply Chain Advisory: RCE Vulnerabilities Across the AI Ecosystem,” April 15, 2026.

The Hacker News, “Anthropic MCP Design Vulnerability Enables RCE, Threatening AI Supply Chain,” April 21, 2026.

The Register, “Anthropic won’t own MCP ‘design flaw’ putting 200K servers at risk, researchers say,” April 16, 2026.

Security Week, “‘By Design’ Flaw in MCP Could Enable Widespread AI Supply Chain Attacks,” April 2026.

Ben Dickson, “The ‘by design’ security flaw of Model Context Protocol,” Tech Talks, April 20, 2026.

Cyata Security, “Three Flaws in Anthropic MCP Git Server,” January 2026 (CVE-2025-68143, CVE-2025-68144, CVE-2025-68145).

Check Point Research, “Caught in the Hook: RCE and API Token Exfiltration Through Claude Code Project Files,” February 2026 (CVE-2025-59536, CVE-2026-21852).

GitHub Advisory Database, CVE-2026-25536 (MCP TypeScript SDK cross-client data leak), CVE-2026-33252 (Go SDK cross-site tool execution), CVE-2026-35568 (Java SDK DNS rebinding).

Cloud Security Alliance, “Threat Modeling Google’s A2A Protocol with the MAESTRO Framework,” April 2025.

Red Hat Developer, “How to enhance Agent2Agent (A2A) security,” August 2025.

FINOS, “AI Governance Framework v2.0: Addressing Agentic AI Risks,” November 2025.

Tetrate / FINOS, agentic AI risk extension, October 2025.

NIST SP 800-162, “Guide to Attribute Based Access Control (ABAC) Definition and Considerations,” 2014.

OASIS XACML specifications, 2003–present.